The scientific method is founded on making and testing hypotheses

Evaluation is just another name for testing

Our hypotheses are often about differences

So, we often look at a number of trials

And ask whether the results are different or not

But what counts as different?

So we're going to be measuring things

And comparing (sets of) measurements

Each task we look at will have its own appropriate measurements

And thus each comparison will be in its own terms

But one issue will be present throughout: are the differences we find significant?

Here's a simple example

Answer, in fact: 'No'

Percentages can be the wrong basis for comparing outcomes if the populations are of different sizes
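A minimal sketch of why this matters, using made-up counts (the numbers below are hypothetical and are not the data from the example above). Two populations can show the same percentage, yet a single extra case moves the small population's percentage a hundred times further:

```python
def percentage(count, total):
    """Return count as a percentage of total."""
    return 100 * count / total


def swing_from_one_case(count, total):
    """How far the percentage moves if one more case is counted."""
    return percentage(count + 1, total) - percentage(count, total)


# Hypothetical counts: (cases, population size)
small = (3, 10)
large = (300, 1000)

# Both populations report the same percentage...
print(percentage(*small), percentage(*large))

# ...but one extra case shifts the small one by about 10 points
# and the large one by only about 0.1 points.
print(swing_from_one_case(*small), swing_from_one_case(*large))
```

So identical percentages can carry very different amounts of evidence.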

Significance measurement can be complex to understand, but the basic idea is simple:

Standard deviation is essentially a measure of how representative the mean is

Definitions:

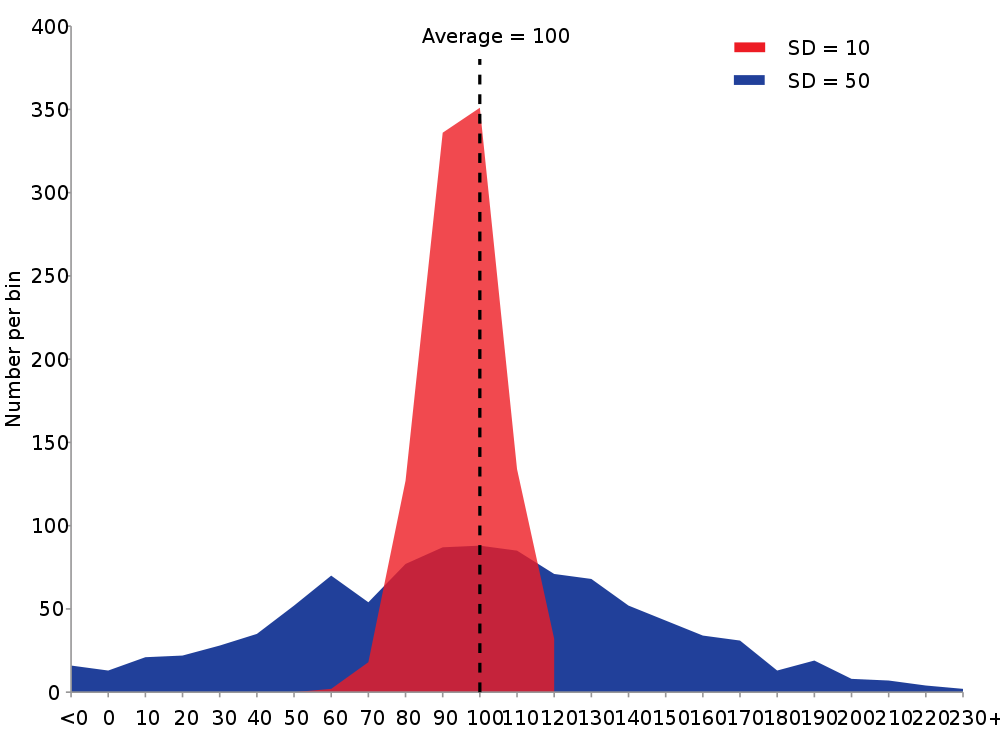

Different standard deviation means different representativeness or reliability for the means

In the blue case, some items are a long way from the mean
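As a rough illustration, here are two made-up data sets with the same mean but very different standard deviations. The values and the names `tight` and `spread` are hypothetical; `spread` plays a role like the blue case, with items a long way from the mean. The sketch uses the population standard deviation (`pstdev`, dividing by n); the definition pictured above may instead divide by n - 1.

```python
from statistics import mean, pstdev

# Hypothetical measurements, chosen so both sets have the same mean
tight = [9, 10, 10, 11]    # every item is close to the mean
spread = [2, 6, 14, 18]    # some items are a long way from the mean

for name, data in [("tight", tight), ("spread", spread)]:
    # pstdev is the square root of the average squared distance from the mean
    print(name, "mean:", mean(data), "sd:", round(pstdev(data), 2))
```

Both means are 10, but the mean is a far better summary of `tight` than of `spread`.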

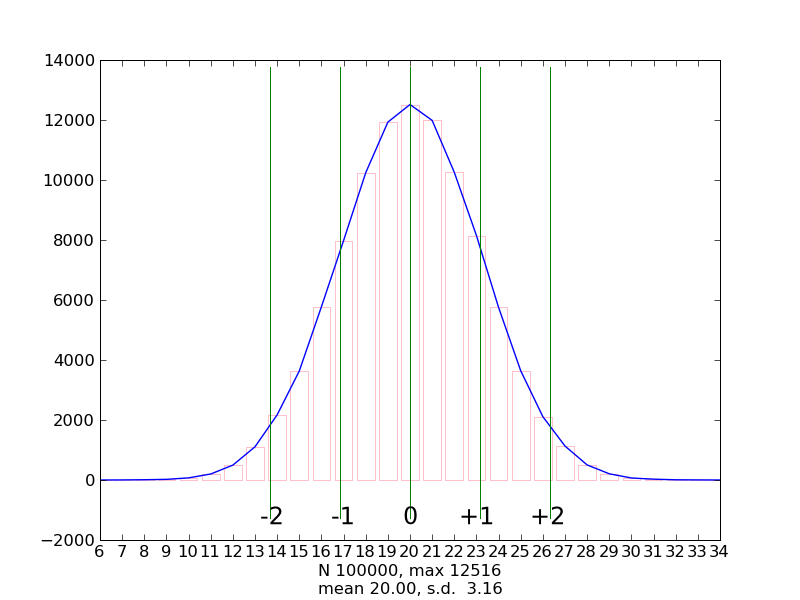

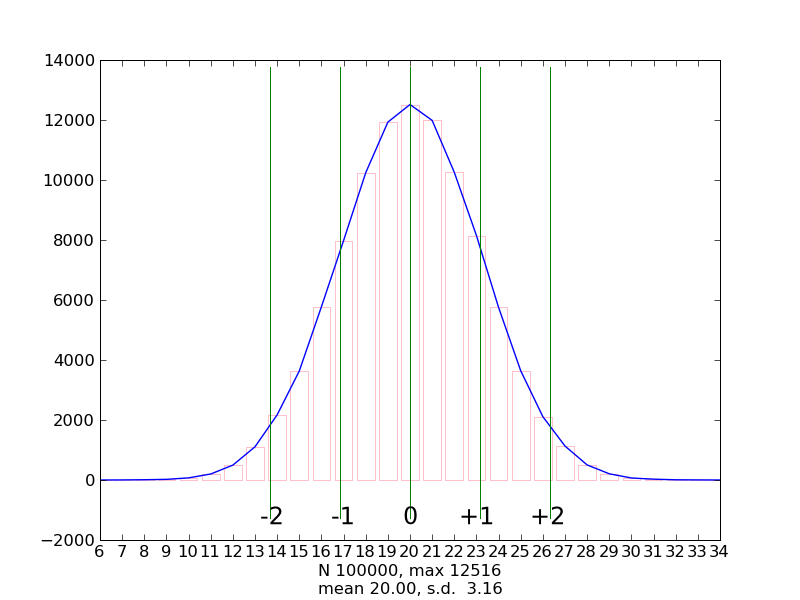

If we look at the distribution of outcomes over many coin-toss trials, it looks like this:

That's a classic normal distribution

The peak is at 50% heads, but there are lots of other plausible outcomes.

It looks like most of the results are within 2 standard deviations.

This is a known property of any true normal distribution: about 95% of outcomes lie within 2 standard deviations of the mean
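The claim can be checked numerically. The sketch below assumes 100 tosses per trial, which is an arbitrary choice for illustration and not a figure from the slides. It computes the exact share of fair-coin outcomes within 2 standard deviations of the mean, and compares it with the same share for an ideal normal distribution:

```python
from math import comb, sqrt
from statistics import NormalDist


def fraction_within(n_tosses, n_sd=2.0):
    """Exact probability that a fair coin's head count lies within
    n_sd standard deviations of the mean over n_tosses tosses."""
    mean = n_tosses / 2
    sd = sqrt(n_tosses) / 2  # sqrt(n * 0.5 * 0.5) for a fair coin
    inside = sum(
        comb(n_tosses, heads)
        for heads in range(n_tosses + 1)
        if abs(heads - mean) <= n_sd * sd
    )
    return inside / 2 ** n_tosses


def normal_within(n_sd=2.0):
    """Share of an ideal normal distribution within n_sd of its mean."""
    return 2 * NormalDist().cdf(n_sd) - 1


print(round(fraction_within(100), 3))  # coin tosses: roughly 0.96
print(round(normal_within(2.0), 4))    # ideal normal curve: 0.9545
```

The coin-toss figure is slightly higher than the ideal one because head counts are whole numbers and the boundary values themselves are counted as inside.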

Consider my earlier claim that 13 heads is probably a sign of an unfair coin

The graph tells us that 13 is outside the 2 standard deviation boundary

So if the coin is fair, there's only about a 4% chance of getting a result as extreme as 13 heads

"about 4%" doesn't seem a crisp way to report on an experiment

By convention, we say a result is significant if the chance of it arising by accident is less than 5%

So, 2 standard deviations is not quite the right boundary: exactly 5% of a normal distribution lies outside 1.96 standard deviations

So a result outside the 1.96 boundary will come up about once in 20 trials on average, even if the coin is fair.

So we say that a result outside that boundary is significant at "p < .05"
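These boundary figures can be reproduced with Python's standard library. This is only a sketch; the helper names `two_sided_tail` and `boundary_for` are made up for illustration:

```python
from statistics import NormalDist

standard_normal = NormalDist()  # mean 0, standard deviation 1


def two_sided_tail(z):
    """Chance of landing more than z standard deviations
    from the mean, in either direction."""
    return 2 * (1 - standard_normal.cdf(z))


def boundary_for(alpha):
    """Number of standard deviations that leaves a total
    of alpha in the two tails combined."""
    return standard_normal.inv_cdf(1 - alpha / 2)


print(round(two_sided_tail(2.0), 4))   # 0.0455
print(round(boundary_for(0.05), 2))    # 1.96
print(round(two_sided_tail(1.96), 3))  # 0.05
```

A boundary of exactly 2 standard deviations leaves a little under 5% in the tails, which is why the 5% boundary sits slightly closer in, at 1.96.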

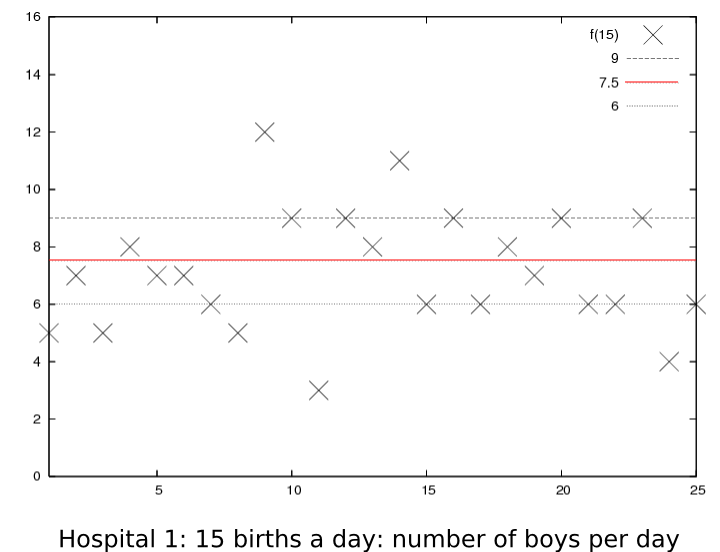

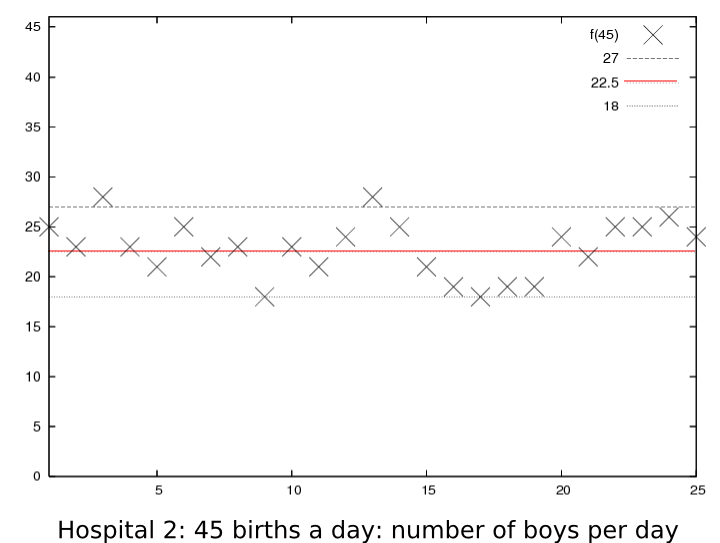

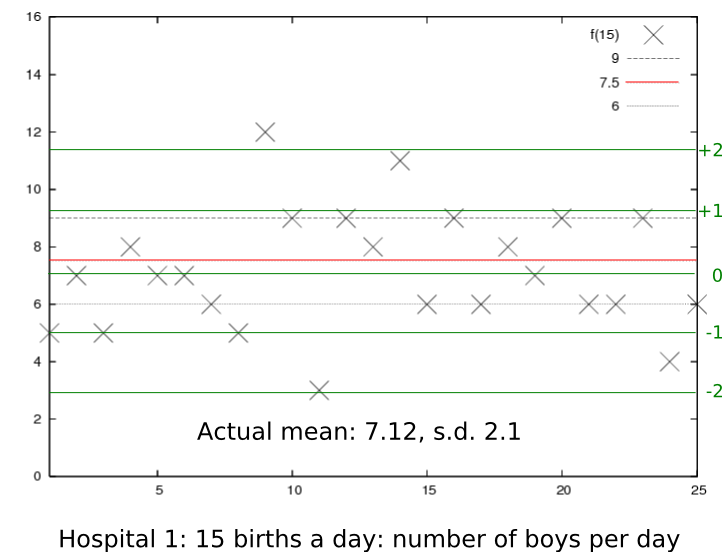

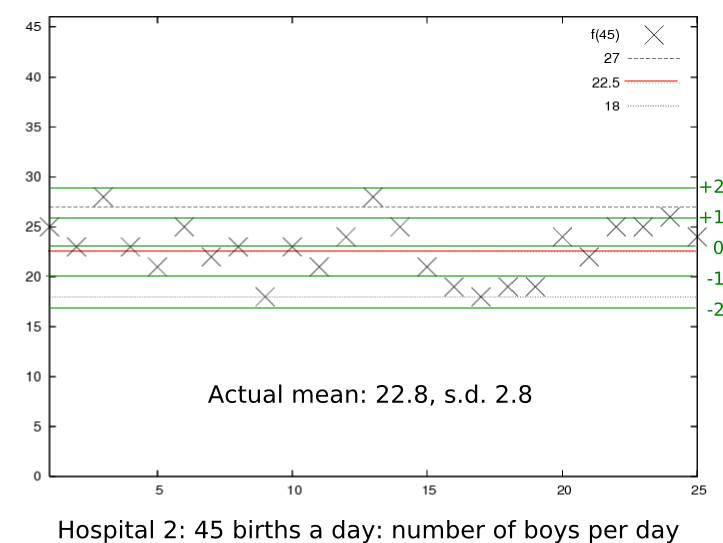

We can look at the hospital data again

Now they don't look so different

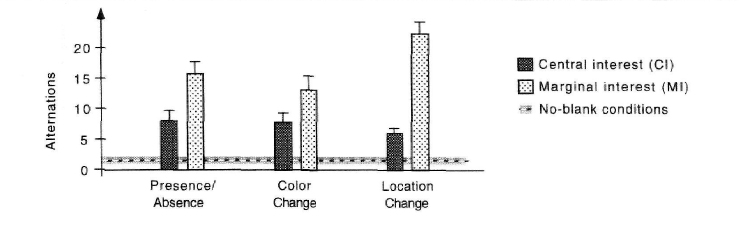

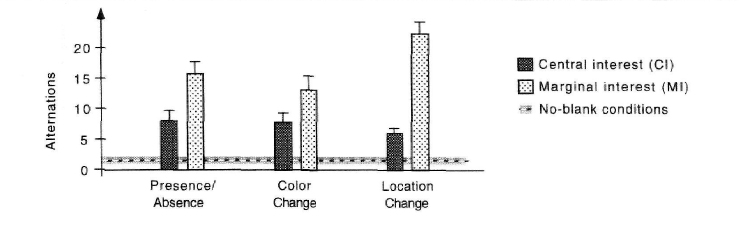

We looked at the Rensink et al. (1997) change blindness paper

One of the independent variables in this flicker paradigm experiment was the location of the change

The authors compared the number of image alternations (reaction time) which participants required to detect three different types of change (presence/absence, colour, location) in central versus marginal areas.

They report:

"...within each type of change, perception of MI changes took significantly longer than perception of CI changes (p < .001 for presence vs. absence; p < .05 for colour; p < .001 for location), even though MI changes were on average more than 20% larger in area."

Based on a standard alpha-level of 0.05, all three comparisons are statistically significant.

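So what they're saying, for example with respect to the difference in detection times for colour changes, is that a difference this large would arise by chance less than 5% of the time if MI and CI changes were really equally easy to detect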

The authors repeated the same experiment, removing the blank "masking" slides between the original and changed images in order to confirm that participants physically could see the changes.

Now participants needed, on average, just under 1 second to see the changes!

The authors report:

"A completely different pattern of results emerged...No significant differences were found between MIs and CIs for any type of change, and no significant differences were found between types of change (p > .3 for all comparisons)."

Here, the no-blank version of the experiment is represented as a horizontal line.

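What they're saying here is that differences as large as those observed would arise by chance more than 30% of the time even if there were no real effect, so there is no evidence of a difference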

Different kinds of measurements require different significance tests.

Broadly speaking, there are two classes of significance tests:

Tests such as the t-test or z-test are the classic parametric tests of significance: they assume the data follow a known distribution, typically the normal distribution.

But for data that do not follow such a distribution, for example many kinds of token frequency data, we can't use them and need non-parametric tests instead
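As one illustration of a test that makes no assumption about the shape of the distribution, here is a minimal sketch of an exact permutation test. This particular test is not named in the slides; it is just one non-parametric option, and the token counts below are hypothetical. It asks: if the group labels were meaningless, how often would a random relabelling give a difference in means at least as large as the one observed?

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation test on the difference of means.
    Only practical for small samples: it tries every relabelling."""
    pooled = list(group_a) + list(group_b)
    observed = abs(mean(group_a) - mean(group_b))
    indices = range(len(pooled))
    extreme = 0
    total = 0
    for chosen in combinations(indices, len(group_a)):
        chosen_set = set(chosen)
        a = [pooled[i] for i in chosen]
        b = [pooled[i] for i in indices if i not in chosen_set]
        total += 1
        # Small tolerance guards against floating-point rounding
        if abs(mean(a) - mean(b)) >= observed - 1e-12:
            extreme += 1
    return extreme / total


# Hypothetical token counts from two small sets of documents
counts_a = [1, 2, 3]
counts_b = [4, 5, 6]
print(permutation_p_value(counts_a, counts_b))  # 0.1
```

Only 2 of the 20 possible relabellings are as extreme as the observed split, so p = 0.1. With samples this small, even a perfectly separated result cannot reach p < .05.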

Another XKCD about significance. For this one you need the original, for the hovertext